UPDATE 2022-10-21: Added update on 86Box’s porting status

One of the hobby projects I’ve been poking at again is written for DOS using Open Watcom C/C++ (v2 fork), and, being as averse to drudgework (and spoiled by modern tooling) as I am, I wanted some automated testing… so I wound up doing another blog-worthy survey of the field.

Unit Testing

Funny enough, there are actually several easy-to-use unit test frameworks that will build perfectly well with Open Watcom C/C++ v1.9 inside DOSBox… and that is the first test. There’s no point testing anything further if it’s not compatible in the first place.

TL;DR: greatest is the greatest.

greatest (ISC License) (My Recommendation)

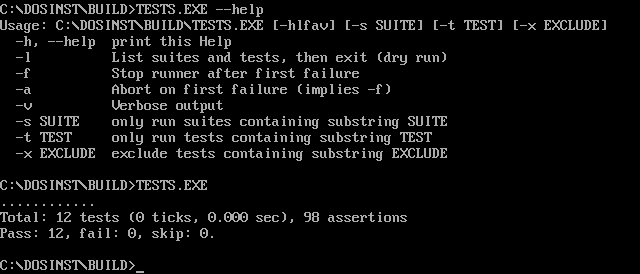

This is probably the best bang for your buck if you want a simple test harness for your DOS projects. Just drop greatest.h into your project, add a few GREATEST_MAIN_* macro invocations to your test .c file, and start using RUN_TEST or RUN_SUITE and ASSERT_* macros.

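    #include <string.h>  /* strlen() */
    #include "greatest.h"

    /* some_arg, other_arg, foo, bar and baz are placeholders */
    TEST test_func(/* arguments */) {
        ASSERTm("Test string must not be empty", strlen(some_arg));
        /* ... */
        ASSERT(foo);
        ASSERT_EQ(bar, other_arg);
        /* ... */
        ASSERT_STR_EQ(baz, some_arg);
        PASS();
    }

    GREATEST_MAIN_DEFS();

    int main(int argc, char **argv) {
        GREATEST_MAIN_BEGIN();  /* also parses argc/argv */

        RUN_TESTp(test_func, /* args */);

        /* Suffix the next test's name so the two runs are distinguishable */
        greatest_set_test_suffix("other_args");
        RUN_TESTp(test_func, /* other args */);

        /* ... */

        GREATEST_MAIN_END();  /* prints the report and returns from main */
    }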

It’s also surprisingly featureful for something so simple:

- It claims to work with any C89 compiler, does no dynamic allocation, and I had no problem building and running a real-mode executable from it inside DOSBox.

- It supports passing a userdata argument to a test function with RUN_TEST1, so you can define your test once and then feed it various different inputs.

- If you’ve got a compiler which supports the requisite C99 feature (variadic macros), you can use RUN_TESTp to invoke a test function with an arbitrary number of arguments. (And I did use RUN_TESTp in my real-mode test.exe.)

- It provides SET_SETUP and SET_TEARDOWN macros which accept userdata arguments.

- It’ll handle --help for you, but can also be invoked as a library for integration into a larger executable.

- It’ll give you the familiar “one period per successful test, but verbose output for failing tests” output by default, but there are also contrib scripts for colourizing the output or converting it to TAP format.

- It supports filtering which tests get run and listing registered tests from the command line.

- It supports macros to randomly shuffle the order of tests or test suites to reveal hidden data dependencies.

For something that works with Open Watcom C/C++ v1.9, the experience is surprisingly reminiscent of Python’s standard library unittest module.

While it lacks some “check this and show the values if they fail” assertions I would have preferred, such as greater/less than comparisons (ironic for a library named “greatest”), there’s a PR adding them that you can grab, and the author is considering them for the next version. (Version 1.5.0 added ASSERT_GT, ASSERT_GTE, ASSERT_LT, and ASSERT_LTE.)

The only wart I noticed is how the assertion macros interact with helper functions. (The assertion macros work by returning enum values, so helper functions which use them have to have a return type of enum greatest_test_res and you have to wrap calls to them in CALL() to conditionally propagate the return.) That said, that’s a minor problem and I am a fan of making good use of return values to avoid hidden control flow and unnecessary side-effects.
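To make that pattern concrete without depending on greatest.h itself, here’s a self-contained sketch that mimics it. The names (enum test_res, MY_ASSERT, MY_PASS, MY_CALL) are hypothetical stand-ins for greatest’s enum greatest_test_res, ASSERT, PASS, and CALL(); the real macros also do bookkeeping that this omits.

```c
/* Hypothetical stand-ins for greatest's enum greatest_test_res and macros */
enum test_res { RES_PASS = 0, RES_FAIL = -1 };

/* An assertion "fails" by returning early from the enclosing function */
#define MY_ASSERT(cond) do { if (!(cond)) return RES_FAIL; } while (0)
#define MY_PASS() return RES_PASS

/* Propagate a non-passing result from a helper; otherwise keep going */
#define MY_CALL(expr) do { \
        enum test_res call_res_ = (expr); \
        if (call_res_ != RES_PASS) return call_res_; \
    } while (0)

/* Counts how often the code after MY_CALL actually ran */
static int steps_after_helper = 0;

/* A helper that uses assertions must itself return the result type */
static enum test_res check_in_range(int value, int lo, int hi) {
    MY_ASSERT(value >= lo);
    MY_ASSERT(value <= hi);
    MY_PASS();
}

static enum test_res test_in_range(void) {
    MY_CALL(check_in_range(5, 0, 10));
    ++steps_after_helper;        /* reached: the helper passed */
    MY_PASS();
}

static enum test_res test_out_of_range(void) {
    MY_CALL(check_in_range(11, 0, 10));
    ++steps_after_helper;        /* skipped: MY_CALL already returned RES_FAIL */
    MY_PASS();
}
```

The thing to watch for is a helper called without the wrapper (MY_CALL here, CALL() in greatest): its failing result is silently discarded and the test carries on.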

All in all, a very nice little harness to choose for retro-C projects… or for any C projects that don’t need something fancier, really.

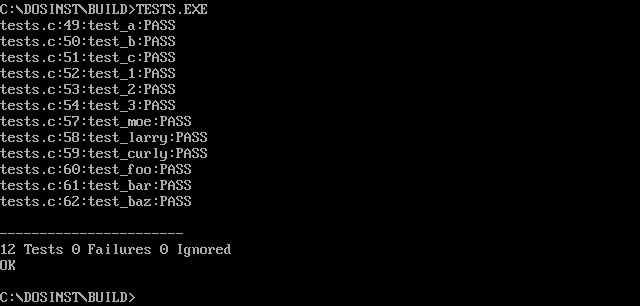

Unity (MIT License)

This is the next step up the complexity ladder, but it’s primarily designed for embedded development, so its features are less well aligned with desktop development.

- It’s split into three files (two headers and a .c file).

- It doesn’t handle argument parsing (no --help, no test filtering).

- The introductory documentation has a lot of “Here’s how to do this with microcontrollers and your favourite non-Watcom build system” content that can get you side-tracked if you’re not careful.

- Because it’s designed to make microcontrollers first-class citizens, it’s got a lot of ASSERT macros which are just more specialized versions of what greatest offers (e.g. TEST_ASSERT_EQUAL_HEX8).

- It expects you to have one setup and teardown function per file, rather than providing a macro to register them with arguments to pass in, so you may have more code duplication.

- I couldn’t find any evidence that it provides an equivalent to greatest’s RUN_TESTp for calling a template function with varying arguments.

- The assertion macros are more verbose (TEST_ASSERT_TRUE_MESSAGE instead of greatest’s ASSERTm).

- Its default configuration depends on more of the standard library, so I had to remove some of my compiler flags intended for size optimization to get it to build.

Going from greatest to this reminds me of going from POSIX to Java or Windows APIs.

On the plus side, it does have some advantages:

- It comes with a lot of documentation, including a printable cheat sheet for the assert macros.

- If you don’t mind the lack of “develop DOS software on DOS” purity, it includes some Ruby scripts to generate test boilerplate for you.

- It has a few “automatically pretty-print on failure” assertions that greatest is missing, like array and bitfield equality tests.

…but still no less/greater than pretty-print macros!

Once you ignore the flood of details in the introduction that are irrelevant to DOS retro-hobby development, it becomes pretty clear that writing a Unity test suite is almost identical to writing a greatest test suite.

Drop the three Unity files somewhere your compiler can find them, tweak your makefile to also build and link unity.c, and then write a little test program:

    #include <string.h>  /* strlen() */
    #include "unity.h"

    void setUp(void) {}     /* Required or it'll fail to link */
    void tearDown(void) {}  /* Required or it'll fail to link */

    /* some_arg, other_arg, foo, bar and baz are placeholders */
    void test_a(void) {
        TEST_ASSERT_TRUE_MESSAGE(strlen(some_arg), "Test string must not be empty");
        /* ... */
        TEST_ASSERT_TRUE(foo);
        TEST_ASSERT_EQUAL_UINT(bar, other_arg);
        /* ... */
        TEST_ASSERT_EQUAL_STRING(baz, some_arg);
    }

    int main(void) {
        UNITY_BEGIN();
        RUN_TEST(test_a);
        /* ... */
        return UNITY_END();  /* number of failures, so 0 means success */
    }

Final verdict: It’s certainly nicer than JTN002 – MinUnit (Heck, I could write something better than that) and it’s probably the best choice for testing embedded C, but it’s not for DOS retro-computing.

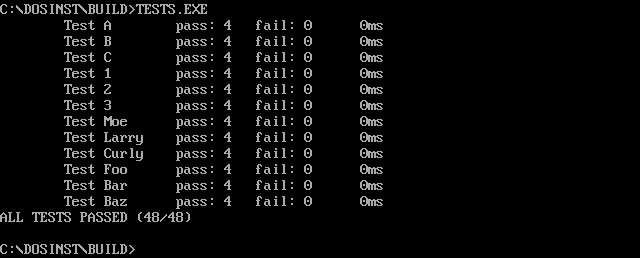

minctest

For the most part, this is unarguably a worse choice than the previous two options, having fewer assertion types than either, less supporting documentation, no support for parameterized tests, and no helper for command-line options… but it does do two things which I think are valuable:

- It shows pass/fail counts for individual assertions within a test case.

- It lets you easily and obviously set custom display names for tests.

Again, it’s a fairly simple API. One minctest.h file to include, and then you write tests like this:

    #include "minctest.h"

    void test_a(void) {
        lok(1 == 1);
        /* ... */
        lsequal("foo", "foo");
        /* ... */
        lequal(2, 2);
        lfequal(3.0, 3.0);
    }

    int main(int argc, char *argv[]) {
        lrun("Test A", test_a);  /* "Test A" is the display name */
        /* ... */
        lresults();
        return lfails != 0;
    }

In the end, it’s not something I’d use for anything when greatest and Unity exist, but it’s a good data point for test suite UI design.
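Both of those features are cheap to reproduce, which is part of why they make good UI data points. Here’s a minimal sketch of per-assertion counts reported under a custom display name; CHECK, run_named, and the counters are hypothetical names written in the spirit of minctest, not taken from its source.

```c
#include <stdio.h>

/* Hypothetical global counters shared by every test */
static int checks_run = 0;
static int checks_failed = 0;

/* Count every assertion; report, but don't abort, on failure */
#define CHECK(cond) do { \
        ++checks_run; \
        if (!(cond)) { \
            ++checks_failed; \
            printf("  %s:%d: failed: %s\n", __FILE__, __LINE__, #cond); \
        } \
    } while (0)

/* Run one test under a display name and print its own pass/fail counts */
static void run_named(const char *name, void (*test)(void)) {
    int run_before = checks_run;
    int failed_before = checks_failed;
    int failed;

    test();
    failed = checks_failed - failed_before;
    printf("%-20s pass: %d fail: %d\n", name,
           (checks_run - run_before) - failed, failed);
}

/* Example test: the second check deliberately fails to show the counts */
static void test_arithmetic(void) {
    CHECK(1 + 1 == 2);
    CHECK(2 * 2 == 5);
    CHECK(3 - 1 == 2);
}
```

Calling run_named("Arithmetic", test_arithmetic) prints the failing check’s location and expression, then a summary line reporting 2 passes and 1 failure for that test.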

Others…

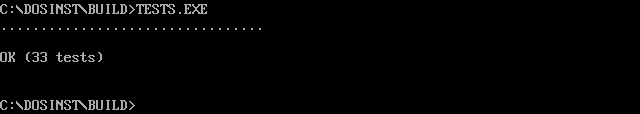

CuTest (zlib/libpng License)

CuTest (zlib/libpng License) does pass my initial triage question of “Does this cross-build from the Linux version of Open Watcom C/C++ 1.9 and run as a real-mode EXE under DOSBox?” …but that’s it.

First, it was the only option that built successfully but then failed at runtime, with a Stack Overflow error, until I raised Open Watcom’s notoriously small default stack size with the OPTION STACK linker directive.

Second, and most damningly, the documentation and distribution archive front-load too much complexity… especially when, from what I can tell, it still produces a worse overall experience than greatest.

For that reason, I only ran the example provided in cutest-1.5.zip and this is what it looks like in DOSBox:

I checked µnit, µTest, utest.h, FCTX, and siu’s MinUnit but they all failed the “Will the distributed source build for real-mode DOS with Open Watcom 1.9 without patching?” test.

I also checked Labrat, CUnit, Check, Embedded Unit, and cmocka, but they were even more complex than CuTest to get going, so I didn’t even bother checking whether they would compile.

(It also doesn’t help that some of these rejected options are under the Unlicense, which multiple parties have criticized, and which doesn’t reliably account for “when in doubt, protect the rightsholder from their own ignorance” provisions in jurisdictions like Germany the way CC0 would. See this review of the CC0 for details.)

Snapshot Testing

If you’re testing something like a wrapper for the int 10h Video BIOS APIs, the simplest way to assert the correct results is:

- Clear the screen

- Assert that the screen buffer is in the expected state

- Do some drawing

- Check that screen buffer’s contents match a saved copy of what it should look like

Not only does this allow testing on real hardware without some kind of fancy VGA capture rig, it also makes the test more generally applicable:

- There are rendering differences between VGA and earlier color graphics adapters, but, because EGA and VGA achieve the default 80×25 color text mode by emulating CGA text mode, the data your test reads out of the screen buffer will be the same regardless.

- DOSBox uses different fonts when you switch the machine= setting between different graphics adapters.

- Screenshotting is affected by things like the aspect and scaler settings in your DOSBox configuration.

- Taking a screenshot of an emulator window requires different APIs on different host platforms if the emulator wasn’t specifically built to be scriptable in that way.

- Comparing VGA output on real hardware is even more difficult because producing and then capturing VGA output is a digital-to-analog-to-digital conversion.

On the other hand, reading the screen buffer is just a matter of synthesizing a pointer to the right memory address and then reading out the correct number of bytes. (For CGA text mode, or the emulation of it that EGA and VGA provide, the address is B800:0000 and, for the default 80×25 text mode, the length is 4000 bytes: 80 × 25 cells of one character byte plus one attribute byte each.)
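The arithmetic behind those numbers, as a sketch: a real-mode segment:offset pair maps to the linear address segment × 16 + offset, so B800:0000 is B8000h, and each cell is a character byte followed by an attribute byte. The grab_screen() helper is hypothetical and only built for 16-bit Open Watcom (assumed to define __WATCOMC__ and __I86__), where MK_FP() from <i86.h> builds the far pointer.

```c
/* 80x25 colour text mode: one character byte plus one attribute byte per cell */
#define TEXT_COLS    80
#define TEXT_ROWS    25
#define SCREEN_BYTES (TEXT_COLS * TEXT_ROWS * 2)   /* 4000 */

/* Real-mode segment:offset -> 20-bit linear address */
static unsigned long linear_addr(unsigned seg, unsigned off) {
    return ((unsigned long)seg << 4) + off;
}

/* Byte offset of the character at (row, col); its attribute follows at +1 */
static unsigned cell_offset(unsigned row, unsigned col) {
    return (row * TEXT_COLS + col) * 2;
}

#if defined(__WATCOMC__) && defined(__I86__)
#include <i86.h>   /* MK_FP */

/* Hypothetical helper: copy the live text buffer at B800:0000 into `out` */
static void grab_screen(unsigned char *out) {
    const unsigned char __far *vram =
        (const unsigned char __far *)MK_FP(0xB800, 0);
    unsigned i;

    for (i = 0; i < SCREEN_BYTES; ++i)
        out[i] = vram[i];
}
#endif
```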

Doing it this way allows you to run your testing entirely within DOS by writing a simple harness around your code which sets up the screen beforehand and dumps the memory to a file afterward, ready to be compared like any other “Are these null-containing buffers equal?” check. (This means you can easily run the test in any DOS environment, which is great for portability testing.)
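The dump-and-compare half might look like this minimal sketch. save_snapshot() and snapshot_matches() are hypothetical helpers; they compare with memcmp() rather than strcmp() because the buffer can contain NUL bytes, and they open files in binary mode so a DOS C library won’t translate newline bytes.

```c
#include <stdio.h>
#include <string.h>

#define SCREEN_BYTES 4000   /* 80x25 cells, 2 bytes each */

/* Write a captured screen to `path`. Returns 1 on success, 0 on failure. */
static int save_snapshot(const unsigned char *screen, const char *path) {
    FILE *f = fopen(path, "wb");   /* binary: no newline translation */
    size_t n;

    if (f == NULL)
        return 0;
    n = fwrite(screen, 1, SCREEN_BYTES, f);
    return fclose(f) == 0 && n == SCREEN_BYTES;
}

/* Compare a captured screen with a saved snapshot. Returns 1 on a match. */
static int snapshot_matches(const unsigned char *screen, const char *path) {
    /* static keeps these 4000 bytes off Open Watcom's small default stack */
    static unsigned char expected[SCREEN_BYTES];
    FILE *f = fopen(path, "rb");
    size_t n;

    if (f == NULL)
        return 0;
    n = fread(expected, 1, SCREEN_BYTES, f);
    fclose(f);
    /* memcmp, not strcmp: the buffers may contain NUL bytes */
    return n == SCREEN_BYTES && memcmp(expected, screen, SCREEN_BYTES) == 0;
}
```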

I haven’t had time to try this yet, but, if you’re running on EGA or later, where 80×25 text mode has access to four different display pages, it may even be possible to do it right inside one of the unit test runners I examined above without interfering with the test runner output, by switching away from the default page before running the test and back after to prevent it from erasing the runner’s status output.

(The main thing I need to check is whether B8000h gets remapped to the active page. If it doesn’t, then the utility of using page flipping to run the snapshot tests inside the unit test harness would be limited to int 10h APIs that follow the active page.)
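To experiment with the page-flipping idea, here’s a sketch. INT 10h function 05h selects the active display page and function 0Fh reports it in BH; set_active_page() and get_active_page() are hypothetical wrappers around Open Watcom’s int86(), compiled only for 16-bit Open Watcom. page_offset() assumes the usual BIOS layout in which 80×25 pages sit 4096 (1000h) bytes apart within segment B800h; it doesn’t settle the remapping question above.

```c
/* Assumed standard BIOS layout: in 80x25 colour text mode, display pages
   are 4096 bytes (0x1000) apart, even though only 4000 bytes are visible */
#define PAGE_BYTES 0x1000u

/* Offset of display page `page` within segment B800h */
static unsigned page_offset(unsigned char page) {
    return (unsigned)page * PAGE_BYTES;
}

#if defined(__WATCOMC__) && defined(__I86__)
#include <i86.h>   /* union REGS, int86() */

/* INT 10h, AH=05h: make `page` the displayed page */
static void set_active_page(unsigned char page) {
    union REGS r;

    r.h.ah = 0x05;
    r.h.al = page;
    int86(0x10, &r, &r);
}

/* INT 10h, AH=0Fh: returns the video mode in AL and the active page in BH */
static unsigned char get_active_page(void) {
    union REGS r;

    r.h.ah = 0x0F;
    int86(0x10, &r, &r);
    return r.h.bh;
}
#endif
```

A test could save get_active_page(), call set_active_page(1), draw and capture, then restore the saved page so the runner’s output reappears.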

I’ll probably put up some sample code once I get to writing this part of the testing infrastructure for my project.

Functional Testing

Concept

Functional testing for a DOS program is complicated, because there is so little abstraction from the underlying platform. In fact, I think that, in the general case, it’s only worth the trouble if you run the test driver outside the DOS system under test.

This means one of two things:

- Run the program under test in an emulator, with the functional test harness running on the host operating system.

- Run the program under test on real hardware, with the functional test harness running on another machine.

To support both cases, and to avoid the needless complexity and potential for mistakes that would come from using a TSR, the solution I’ve chosen is to instrument the program to be tested with a simple serial console. Then, the same mechanism can work with real DOS machines (just use a null modem cable and, if your development machine is modern, a USB-Serial adapter) or with any DOS emulator that provides a means to connect an emulated serial port to the host system.

The serial console needn’t be complicated. Just log messages which the test harness can assert for correctness, and add a means to substitute input that would usually be provided by the user.
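A minimal polled serial console could be as small as this sketch. It assumes an 8250/16550-compatible UART on COM1 at the conventional I/O base 3F8h and uses Open Watcom’s inp(), outp(), kbhit(), and getch() from <conio.h>; serial_init(), serial_log(), and poll_input() are hypothetical names. Only uart_divisor() is portable, so the rest is guarded by __WATCOMC__.

```c
/* Conventional I/O base for COM1 (COM2 is usually 0x2F8) */
#define COM1_BASE 0x3F8u

/* 8250/16550 register offsets from the base port */
#define UART_DATA 0  /* THR (write) / RBR (read); divisor low byte when DLAB=1 */
#define UART_IER  1  /* interrupt enable; divisor high byte when DLAB=1 */
#define UART_LCR  3  /* line control; bit 7 is DLAB */
#define UART_MCR  4  /* modem control */
#define UART_LSR  5  /* line status; bit 0 = data ready, bit 5 = THR empty */

/* Divisor latch value for a baud rate (UART base rate 1.8432 MHz / 16) */
static unsigned uart_divisor(unsigned long baud) {
    return (unsigned)(115200UL / baud);
}

#ifdef __WATCOMC__
#include <conio.h>   /* inp(), outp(), kbhit(), getch() */

static void serial_init(unsigned base, unsigned long baud) {
    unsigned div = uart_divisor(baud);

    outp(base + UART_IER, 0x00);         /* polled I/O: no interrupts */
    outp(base + UART_LCR, 0x80);         /* DLAB=1: expose divisor latch */
    outp(base + UART_DATA, div & 0xFF);  /* divisor low byte */
    outp(base + UART_IER, div >> 8);     /* divisor high byte */
    outp(base + UART_LCR, 0x03);         /* 8 data bits, no parity, 1 stop, DLAB=0 */
    outp(base + UART_MCR, 0x03);         /* raise DTR and RTS */
}

static void serial_putc(unsigned base, char c) {
    while (!(inp(base + UART_LSR) & 0x20))  /* wait until THR is empty */
        ;
    outp(base + UART_DATA, (unsigned char)c);
}

/* One CR LF terminated log line per event, for the harness to assert on */
static void serial_log(unsigned base, const char *msg) {
    while (*msg)
        serial_putc(base, *msg++);
    serial_putc(base, '\r');
    serial_putc(base, '\n');
}

/* Input substitution: prefer a byte sent by the harness, else the keyboard.
   Returns -1 if neither has anything waiting. */
static int poll_input(unsigned base) {
    if (inp(base + UART_LSR) & 0x01)
        return inp(base + UART_DATA);
    if (kbhit())
        return getch();
    return -1;
}
#endif
```

Polling keeps it free of interrupt handlers, in keeping with avoiding TSR-style complexity, at the cost of busy-waiting while each byte is sent.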

For additional testing, I’ll probably implement some kind of way to have the program under test stop at a machine-readable prompt to continue, so the harness can deterministically capture screenshots and complement the snapshot unit testing above with snapshot integration testing.
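On the host side, both asserting log messages and waiting at a machine-readable prompt boil down to reading lines from the serial stream until an expected one shows up. Here’s a minimal sketch using only stdio; expect_line() is a hypothetical helper, and it assumes the emulator’s serial port has already been opened as a FILE * (for example, a pseudo-terminal, or a socket wrapped with POSIX fdopen()).

```c
#include <stdio.h>
#include <string.h>

/* Read lines from `serial` until one equals `expected` (ignoring the trailing
   CR/LF), giving up after `max_lines` lines or at end of stream.
   Returns the 1-based number of the matching line, or 0 if none matched. */
static int expect_line(FILE *serial, const char *expected, int max_lines) {
    char line[256];   /* longer lines get split across several reads */
    int i;

    for (i = 1; i <= max_lines; ++i) {
        if (fgets(line, sizeof line, serial) == NULL)
            return 0;                        /* EOF or read error */
        line[strcspn(line, "\r\n")] = '\0';  /* strip CR LF from the DOS side */
        if (strcmp(line, expected) == 0)
            return i;
    }
    return 0;
}
```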

With DOSBox, it’s also easy for the test harness to assert for the presence, absence, and/or contents of on-disk files without having to mount or otherwise interact with an emulator’s disk images.

Research

The emulators which look to be capable of bidirectional COM port redirection to the host OS include DOSBox, QEMU, VirtualBox, and Bochs.

PCjs has some kind of serial console support but I haven’t had time to figure out whether it can be exposed to a separate process in the way I need.

I haven’t been in a hurry to investigate DOSEMU compatibility, since it’s more like Wine for DOS programs than a full-blown emulator and, aside from being especially thorough in my compatibility testing, I don’t see testing in it gaining anything if I’m already testing with DOSBox.

Either way, DOSBox should do well for quick and dirty testing, akin to testing a Windows program under Wine, and QEMU or VirtualBox should work for testing real DOS on fake hardware, but testing how software interacts with quirky hardware is another story.

Unfortunately, I’m still looking for a way to make this work with the emulators most suitable for that. PCem and its derivatives, 86Box and VARCem, are currently the state of the art in trying to accurately reproduce vintage hardware via emulation, but none of them have serial port pass-through support.

- PCem’s developer has stated a lack of time to implement serial port pass-through but is willing to accept patches. It supports emulating an NE2000 network adaptor, but the only TSR I’ve yet found for redirecting COM ports over a network socket is paid proprietary software. (Though this TSR may be something I can MacGyver into doing the job. If I can, I’ll have to contact the author to ask for clarification on the license though.)

- 86Box was forked before the frontend was rewritten to be cross-platform, the developers are still soliciting an experienced contributor to port over PCem’s new SDL+wxWidgets frontend, and I don’t know if it was forked before or after the NE2000 support landed. (UPDATE 2022-10-21: 86Box is now cross-platform and easily available to Linux users on Flathub. Its SLiRP networking option functions equivalently to the NAT option in VirtualBox and supports emulating 12 vintage NICs, including the NE2000.)

- VARCem doesn’t offer non-Windows binaries and it was apparently forked from 86Box, so all the same caveats probably apply.

Finding any information on whether serial support has been added to 86Box or VARCem since the fork is complicated by how PCem-lineage emulators provide dummy/unconnected serial and parallel ports to emulated OSes to ensure that the observed behaviour of the hardware for a selected system is 100% accurate.

So far, my best hope for testing handling of hardware quirks is that a half-functional test harness could be rigged up by running 86Box inside Wine (assuming it works in Wine), using its LPT-to-file support and inotify to handle sending data from the program under test to the harness (assuming 86Box doesn’t force block-wise buffering on it), and using XTestFakeKeyEvent to send control inputs from the harness to the program under test. (Obviously, this would only work on X11-based desktops capable of using Wine to run x86-based Windows applications.)
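The inotify half of that plan might look like this sketch, assuming Linux and that 86Box appends to an ordinary file as the emulated program prints. wait_for_write() blocks until the kernel reports IN_MODIFY on the watched file, and read_new() fetches only the bytes appended since the previous read; both names are hypothetical.

```c
#include <stdio.h>
#include <sys/inotify.h>
#include <unistd.h>

/* Block until the watched file is modified. Returns 0 on success, -1 on error. */
static int wait_for_write(int inotify_fd) {
    /* Events are discarded unparsed; only their arrival matters here */
    char events[4096];

    return read(inotify_fd, events, sizeof events) > 0 ? 0 : -1;
}

/* Read up to `size` bytes appended to `path` since `*offset`, advancing it.
   Returns the number of bytes read. */
static size_t read_new(const char *path, long *offset, char *buf, size_t size) {
    FILE *f = fopen(path, "rb");
    size_t n = 0;

    if (f == NULL)
        return 0;
    if (fseek(f, *offset, SEEK_SET) == 0) {
        n = fread(buf, 1, size, f);
        *offset += (long)n;
    }
    fclose(f);
    return n;
}
```

The watch itself would be set up once with inotify_init() and inotify_add_watch(fd, path, IN_MODIFY). If 86Box does buffer its output block-wise, this still works, but the harness would only see data in larger, delayed chunks.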

Whatever I come up with, if it’s easy enough to generalize, I hope to release it as a reusable test framework once I’ve got something useful.

Automated Testing for Open Watcom C/C++ and DOS by Stephan Sokolow is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.


I am so glad I stumbled upon this post. I wasn’t even looking for anything testing-related; I was looking for how to write an error handler in Watcom C (I’m new to it).

I am writing a text mode library as a way of learning C programming for MS-DOS so I can tinker with an 8086 computer I recently added to my collection. I have bookmarked this page in my notes, as I will be revisiting it once I have finished my library.

It is genuinely nice to see articles like this still being written.

I’m happy to hear that. Seeing proof that I’m not just writing this stuff as reminders to future me is the best reward.

What kind of error handler are you trying to write? I’m a novice with Watcom C, but I have enough abstract knowledge from other programming languages and areas of interest that I may still be able to help.